Building a Modern DevOps Pipeline: From Code to Production

Building a Modern DevOps Pipeline: From Code to Production

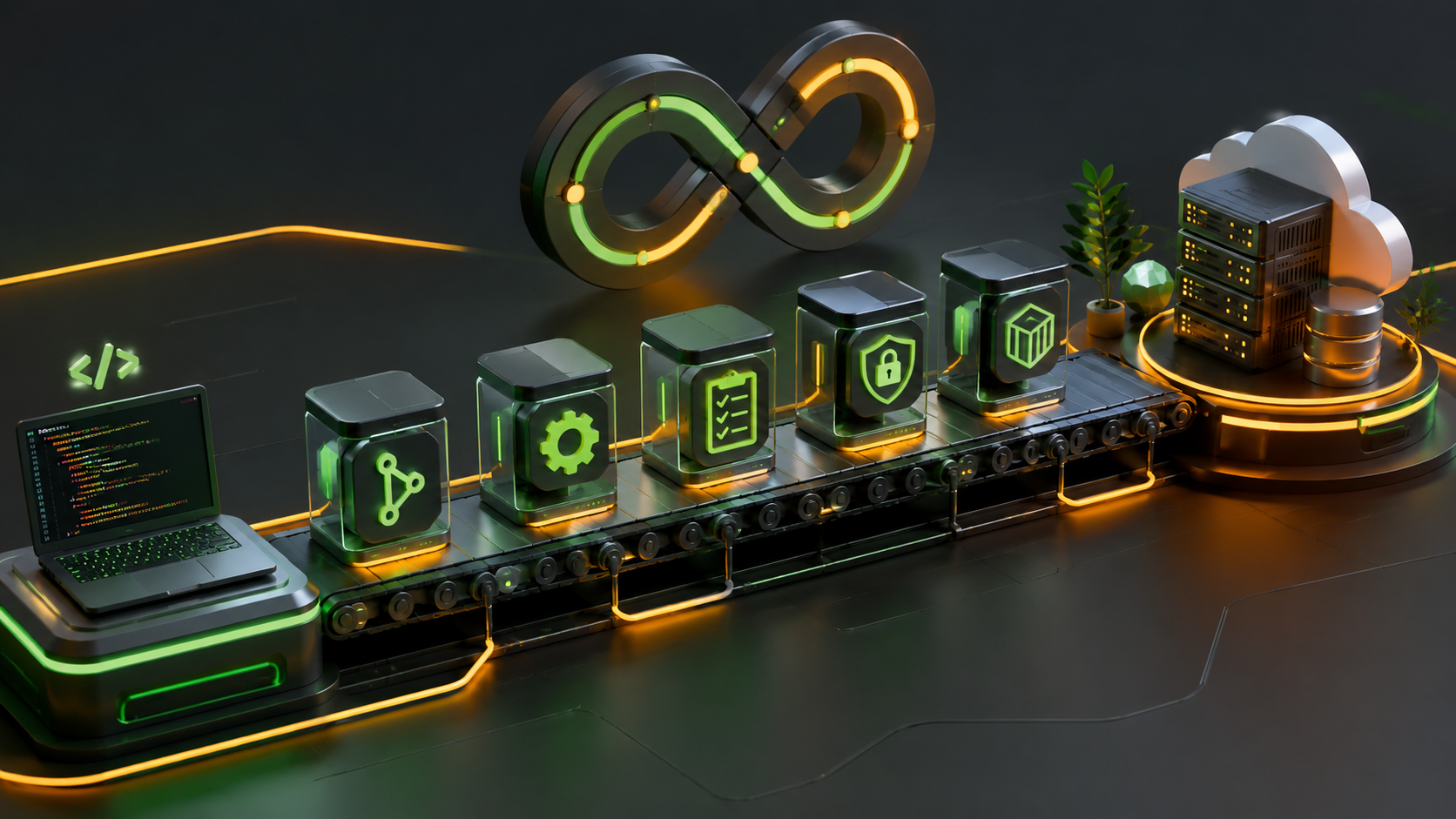

DevOps isn't just about tools—it's a cultural shift that bridges the gap between development and operations. In this deep dive, we'll explore how to build a robust DevOps pipeline that takes your code from a developer's laptop to production with confidence, speed, and reliability.

Understanding the DevOps Lifecycle

The DevOps lifecycle consists of several interconnected phases:

Plan → Code → Build → Test → Release → Deploy → Operate → Monitor → (back to Plan)

Each phase has specific tools and practices:

- Plan: Jira, Trello, GitHub Issues

- Code: Git, GitHub, GitLab, Bitbucket

- Build: Jenkins, GitHub Actions, GitLab CI, CircleCI

- Test: Jest, Pytest, Selenium, JUnit

- Release: Semantic Versioning, Git Tags

- Deploy: Docker, Kubernetes, AWS ECS, Terraform

- Operate: Ansible, Puppet, Chef

- Monitor: Prometheus, Grafana, ELK Stack, DataDog

Setting Up Your CI/CD Pipeline

Continuous Integration and Continuous Deployment (CI/CD) is the backbone of modern DevOps. Let's build a production-ready pipeline using GitHub Actions.

GitHub Actions Workflow Example

name: Node.js CI/CD Pipeline

on:
  push:
    branches: [ main, develop ]
  pull_request:
    branches: [ main ]

env:
  NODE_VERSION: '18.x'
  DOCKER_REGISTRY: ghcr.io
  IMAGE_NAME: ${{ github.repository }}

jobs:
  # Job 1: Code Quality & Testing
  test:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v3

      - name: Setup Node.js
        uses: actions/setup-node@v3
        with:
          node-version: ${{ env.NODE_VERSION }}
          cache: 'npm'

      - name: Install dependencies
        run: npm ci

      - name: Run linter
        run: npm run lint

      - name: Run unit tests
        run: npm run test:unit

      - name: Run integration tests
        run: npm run test:integration

      - name: Generate coverage report
        run: npm run test:coverage

      - name: Upload coverage to Codecov
        uses: codecov/codecov-action@v3
        with:
          token: ${{ secrets.CODECOV_TOKEN }}

  # Job 2: Security Scanning
  security:
    runs-on: ubuntu-latest
    needs: test
    steps:
      - name: Checkout code
        uses: actions/checkout@v3

      - name: Run Snyk security scan
        uses: snyk/actions/node@master
        env:
          SNYK_TOKEN: ${{ secrets.SNYK_TOKEN }}

      - name: Run npm audit
        run: npm audit --audit-level=moderate

  # Job 3: Build and Push Docker Image
  build:
    runs-on: ubuntu-latest
    needs: [test, security]
    if: github.ref == 'refs/heads/main'
    # GITHUB_TOKEN needs package write access to push to ghcr.io
    permissions:
      contents: read
      packages: write
    steps:
      - name: Checkout code
        uses: actions/checkout@v3

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v2

      - name: Log in to Container Registry
        uses: docker/login-action@v2
        with:
          registry: ${{ env.DOCKER_REGISTRY }}
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}

      - name: Extract metadata
        id: meta
        uses: docker/metadata-action@v4
        with:
          images: ${{ env.DOCKER_REGISTRY }}/${{ env.IMAGE_NAME }}
          tags: |
            type=ref,event=branch
            type=sha,prefix={{branch}}-
            type=semver,pattern={{version}}

      - name: Build and push Docker image
        uses: docker/build-push-action@v4
        with:
          context: .
          push: true
          tags: ${{ steps.meta.outputs.tags }}
          labels: ${{ steps.meta.outputs.labels }}
          cache-from: type=registry,ref=${{ env.DOCKER_REGISTRY }}/${{ env.IMAGE_NAME }}:buildcache
          cache-to: type=registry,ref=${{ env.DOCKER_REGISTRY }}/${{ env.IMAGE_NAME }}:buildcache,mode=max

  # Job 4: Deploy to Staging
  deploy-staging:
    runs-on: ubuntu-latest
    needs: build
    environment: staging
    steps:
      - name: Checkout code
        uses: actions/checkout@v3

      - name: Configure AWS credentials
        uses: aws-actions/configure-aws-credentials@v2
        with:
          aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
          aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          aws-region: ap-south-1

      - name: Deploy to ECS
        run: |
          aws ecs update-service \
            --cluster staging-cluster \
            --service api-service \
            --force-new-deployment

  # Job 5: Deploy to Production (Manual Approval)
  deploy-production:
    runs-on: ubuntu-latest
    needs: deploy-staging
    environment: production
    steps:
      - name: Checkout code
        uses: actions/checkout@v3

      - name: Configure AWS credentials
        uses: aws-actions/configure-aws-credentials@v2
        with:
          aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
          aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          aws-region: ap-south-1

      - name: Deploy to ECS Production
        run: |
          aws ecs update-service \
            --cluster production-cluster \
            --service api-service \
            --force-new-deployment

      - name: Notify deployment
        uses: 8398a7/action-slack@v3
        with:
          status: ${{ job.status }}
          text: 'Production deployment completed! 🚀'
        env:
          # action-slack v3 reads the webhook URL from this environment variable
          SLACK_WEBHOOK_URL: ${{ secrets.SLACK_WEBHOOK }}

Key Components Explained

1. Multi-stage Testing: The pipeline runs linting, unit tests, and integration tests before the image is built, so failures surface early.

2. Security Scanning: Snyk and npm audit flag known vulnerabilities in dependencies before anything is built or deployed.

3. Docker Layer Caching: cache-from and cache-to keep the BuildKit layer cache in the registry (the buildcache tag), so unchanged layers are reused instead of rebuilt on every run.

4. Environment Separation: Staging and production run as separate GitHub environments. The manual approval gate for production is not defined in the YAML; it comes from a required-reviewers protection rule configured on the production environment in the repository settings.

5. Notifications: A Slack message tells the team when a production deployment has completed.
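The caching in point 3 works because each layer's cache key depends on everything before it. The sketch below is a deliberately simplified model, not BuildKit's actual algorithm: it assumes a step's key is a SHA-256 hash of the parent key, the instruction text and, for COPY steps, the copied files' contents. The step list roughly follows the builder stage of the Dockerfile in the next section; the file contents are hypothetical.

```javascript
const { createHash } = require('node:crypto');

// Simplified cache-key model (not BuildKit's real algorithm): each step's key
// hashes the parent key, the instruction text and, for COPY, the copied files.
function layerKeys(steps, files) {
  const keys = [];
  let parent = '';
  for (const step of steps) {
    const hash = createHash('sha256').update(parent).update(step.instruction);
    for (const path of step.copies ?? []) {
      hash.update(path).update(files[path] ?? '');
    }
    parent = hash.digest('hex');
    keys.push(parent);
  }
  return keys;
}

// Count the leading steps whose keys are unchanged, i.e. layers that can be reused.
function reusableLayers(before, after) {
  let i = 0;
  while (i < before.length && i < after.length && before[i] === after[i]) i++;
  return i;
}

// Hypothetical build steps, roughly following the builder stage.
const steps = [
  { instruction: 'FROM node:18-alpine' },
  { instruction: 'COPY package*.json ./', copies: ['package.json', 'package-lock.json'] },
  { instruction: 'RUN npm ci' },
  { instruction: 'COPY . .', copies: ['package.json', 'package-lock.json', 'src/index.js'] },
  { instruction: 'RUN npm run build' },
];

const v1 = { 'package.json': '{"name":"api"}', 'package-lock.json': 'lock-v1', 'src/index.js': 'v1' };
const v2 = { ...v1, 'src/index.js': 'v2' };           // source-only change
const v3 = { ...v1, 'package-lock.json': 'lock-v2' }; // dependency change

console.log(reusableLayers(layerKeys(steps, v1), layerKeys(steps, v2))); // 3
console.log(reusableLayers(layerKeys(steps, v1), layerKeys(steps, v3))); // 1
```

Because a changed key invalidates every later step, copying package*.json and running npm ci before COPY . . lets a source-only change (v2) reuse the dependency layer, while a lockfile change (v3) rebuilds almost everything.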

Containerization with Docker

Docker ensures consistency across environments. Here's a production-optimized Dockerfile:

Multi-stage Dockerfile

# Stage 1: Dependencies
FROM node:18-alpine AS dependencies
WORKDIR /app

# Copy package files
COPY package*.json ./

# Install production dependencies only (--omit=dev replaces the deprecated --only=production)
RUN npm ci --omit=dev && \
    npm cache clean --force

# Stage 2: Build
FROM node:18-alpine AS builder
WORKDIR /app

# Copy package files
COPY package*.json ./

# Install all dependencies (including dev)
RUN npm ci

# Copy source code
COPY . .

# Build the application
RUN npm run build

# Stage 3: Production
FROM node:18-alpine AS production

# Create non-root user
RUN addgroup -g 1001 -S nodejs && \
    adduser -S nodejs -u 1001

WORKDIR /app

# Copy production dependencies from dependencies stage
COPY --from=dependencies --chown=nodejs:nodejs /app/node_modules ./node_modules

# Copy built application from builder stage
COPY --from=builder --chown=nodejs:nodejs /app/dist ./dist
COPY --from=builder --chown=nodejs:nodejs /app/package*.json ./

# Set environment variables
ENV NODE_ENV=production \
    PORT=3000

# Switch to non-root user
USER nodejs

# Expose port
EXPOSE 3000

# Health check
HEALTHCHECK --interval=30s --timeout=3s --start-period=40s --retries=3 \
  CMD node -e "require('http').get('http://localhost:3000/health', (res) => { process.exit(res.statusCode === 200 ? 0 : 1) })"

# Start application
CMD ["node", "dist/index.js"]

Docker Compose for Local Development
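In the Compose file that follows, depends_on with condition: service_healthy keeps the app container from starting until the Postgres (pg_isready) and Redis (redis-cli ping) healthchecks pass; Compose re-runs each check every interval and marks the service unhealthy after retries consecutive failures. As a rough model of that gate, not Compose's implementation, here is a hypothetical waitUntilHealthy helper whose defaults mirror the 10 s interval and 5 retries used in the file:

```javascript
const { setTimeout: sleep } = require('node:timers/promises');

// Hypothetical helper: call `check` until it reports healthy, waiting
// `intervalMs` between attempts and giving up after `retries` failures.
// A thrown error counts as a failed attempt, like a non-zero exit status.
async function waitUntilHealthy(check, { intervalMs = 10_000, retries = 5 } = {}) {
  for (let attempt = 1; attempt <= retries; attempt++) {
    try {
      if (await check()) return attempt; // healthy: report which attempt succeeded
    } catch {
      // treat exceptions as "not healthy yet"
    }
    if (attempt < retries) await sleep(intervalMs);
  }
  throw new Error(`still unhealthy after ${retries} attempts`);
}

// Example: a simulated dependency that becomes healthy on its third check.
let calls = 0;
waitUntilHealthy(async () => ++calls >= 3, { intervalMs: 10, retries: 5 })
  .then((attempt) => console.log(`healthy on attempt ${attempt}`));
```

The same poll-with-retries shape reappears in the health-check loop of the deployment script later in this article.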

version: '3.8'

services:
  # Application Service
  app:
    build:
      context: .
      dockerfile: Dockerfile
      target: production
    ports:
      - "3000:3000"
    environment:
      - NODE_ENV=development
      - DATABASE_URL=postgresql://postgres:password@postgres:5432/myapp
      - REDIS_URL=redis://redis:6379
    depends_on:
      postgres:
        condition: service_healthy
      redis:
        condition: service_healthy
    volumes:
      - ./src:/app/src
      - ./logs:/app/logs
    networks:
      - app-network
    restart: unless-stopped

  # PostgreSQL Database
  postgres:
    image: postgres:15-alpine
    environment:
      - POSTGRES_USER=postgres
      - POSTGRES_PASSWORD=password
      - POSTGRES_DB=myapp
    ports:
      - "5432:5432"
    volumes:
      - postgres-data:/var/lib/postgresql/data
      - ./scripts/init.sql:/docker-entrypoint-initdb.d/init.sql
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U postgres"]
      interval: 10s
      timeout: 5s
      retries: 5
    networks:
      - app-network

  # Redis Cache
  redis:
    image: redis:7-alpine
    ports:
      - "6379:6379"
    volumes:
      - redis-data:/data
    healthcheck:
      test: ["CMD", "redis-cli", "ping"]
      interval: 10s
      timeout: 3s
      retries: 5
    networks:
      - app-network

  # Nginx Reverse Proxy
  nginx:
    image: nginx:alpine
    ports:
      - "80:80"
      - "443:443"
    volumes:
      - ./nginx/nginx.conf:/etc/nginx/nginx.conf:ro
      - ./nginx/ssl:/etc/nginx/ssl:ro
    depends_on:
      - app
    networks:
      - app-network
    restart: unless-stopped

volumes:
  postgres-data:
  redis-data:

networks:
  app-network:
    driver: bridge

Orchestration with Kubernetes

Kubernetes provides powerful orchestration for containerized applications. Here's a complete deployment setup:

Kubernetes Deployment Manifest

# namespace.yaml
apiVersion: v1
kind: Namespace
metadata:
  name: production
  labels:
    env: production
---
# configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config
  namespace: production
data:
  NODE_ENV: "production"
  LOG_LEVEL: "info"
  API_VERSION: "v1"
---
# secret.yaml (Base64-encoded placeholder values)
apiVersion: v1
kind: Secret
metadata:
  name: app-secrets
  namespace: production
type: Opaque
data:
  # postgresql://user:pass@postgres:5432/myapp
  DATABASE_URL: cG9zdGdyZXNxbDovL3VzZXI6cGFzc0Bwb3N0Z3Jlczo1NDMyL215YXBw
  # supersecretjwttoken
  JWT_SECRET: c3VwZXJzZWNyZXRqd3R0b2tlbg==
  # redis://redis:6379
  REDIS_URL: cmVkaXM6Ly9yZWRpczo2Mzc5
---
# deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-deployment
  namespace: production
  labels:
    app: api
    version: v1
spec:
  replicas: 3
  revisionHistoryLimit: 5
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
  selector:
    matchLabels:
      app: api
  template:
    metadata:
      labels:
        app: api
        version: v1
    spec:
      # Service account for RBAC
      serviceAccountName: api-service-account
      # Security context
      securityContext:
        runAsNonRoot: true
        runAsUser: 1001
        fsGroup: 1001
      # Init container for migrations
      initContainers:
        - name: migration
          image: ghcr.io/neerajsde/api:latest
          command: ['npm', 'run', 'migrate']
          envFrom:
            - configMapRef:
                name: app-config
            - secretRef:
                name: app-secrets
      # Main application container
      containers:
        - name: api
          image: ghcr.io/neerajsde/api:latest
          imagePullPolicy: Always
          ports:
            - name: http
              containerPort: 3000
              protocol: TCP
          # Environment variables
          envFrom:
            - configMapRef:
                name: app-config
            - secretRef:
                name: app-secrets
          # Resource limits
          resources:
            requests:
              memory: "256Mi"
              cpu: "250m"
            limits:
              memory: "512Mi"
              cpu: "500m"
          # Liveness probe
          livenessProbe:
            httpGet:
              path: /health
              port: http
            initialDelaySeconds: 30
            periodSeconds: 10
            timeoutSeconds: 5
            failureThreshold: 3
          # Readiness probe
          readinessProbe:
            httpGet:
              path: /ready
              port: http
            initialDelaySeconds: 10
            periodSeconds: 5
            timeoutSeconds: 3
            failureThreshold: 3
          # Startup probe
          startupProbe:
            httpGet:
              path: /health
              port: http
            initialDelaySeconds: 0
            periodSeconds: 5
            failureThreshold: 30
          # Volume mounts
          volumeMounts:
            - name: logs
              mountPath: /app/logs
      # Volumes
      volumes:
        - name: logs
          emptyDir: {}
      # Pod anti-affinity: prefer spreading replicas across nodes (hostname topology)
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: app
                      operator: In
                      values:
                        - api
                topologyKey: kubernetes.io/hostname
---
# service.yaml
apiVersion: v1
kind: Service
metadata:
  name: api-service
  namespace: production
  labels:
    app: api
spec:
  type: ClusterIP
  ports:
    - port: 80
      targetPort: http
      protocol: TCP
      name: http
  selector:
    app: api
---
# hpa.yaml (Horizontal Pod Autoscaler)
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: api-hpa
  namespace: production
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: api-deployment
  minReplicas: 3
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
    - type: Resource
      resource:
        name: memory
        target:
          type: Utilization
          averageUtilization: 80
  behavior:
    scaleUp:
      stabilizationWindowSeconds: 60
      policies:
        - type: Percent
          value: 50
          periodSeconds: 60
    scaleDown:
      stabilizationWindowSeconds: 300
      policies:
        - type: Percent
          value: 25
          periodSeconds: 60
---
# ingress.yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: api-ingress
  namespace: production
  annotations:
    cert-manager.io/cluster-issuer: letsencrypt-prod
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    # ingress-nginx rate limit: requests per second per client IP
    nginx.ingress.kubernetes.io/limit-rps: "100"
spec:
  # Replaces the deprecated kubernetes.io/ingress.class annotation
  ingressClassName: nginx
  tls:
    - hosts:
        - api.neerajprajapati.in
      secretName: api-tls
  rules:
    - host: api.neerajprajapati.in
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: api-service
                port:
                  number: 80

Deploying to Kubernetes
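Two pieces of arithmetic from the manifests above explain what kubectl rollout status and kubectl get pods -w show. The RollingUpdate strategy (replicas: 3, maxSurge: 1, maxUnavailable: 0) allows at most 4 pods during a rollout and never fewer than 3 available, and the HorizontalPodAutoscaler's core rule is desired = ceil(current × currentUtilization / targetUtilization), clamped to minReplicas and maxReplicas. A simplified sketch of both; it leaves out the HPA's tolerance, stabilization windows and scaling policies:

```javascript
// Resolve an int-or-percent value such as maxSurge: 1 or maxSurge: '25%'.
// Kubernetes rounds maxSurge percentages up and maxUnavailable percentages down.
function resolveIntOrPercent(value, replicas, roundUp) {
  if (typeof value === 'string' && value.endsWith('%')) {
    const raw = (Number.parseFloat(value) / 100) * replicas;
    return roundUp ? Math.ceil(raw) : Math.floor(raw);
  }
  return value;
}

// Pod-count bounds kept during a RollingUpdate.
function rolloutBounds(replicas, maxSurge, maxUnavailable) {
  return {
    maxPods: replicas + resolveIntOrPercent(maxSurge, replicas, true),
    minAvailable: replicas - resolveIntOrPercent(maxUnavailable, replicas, false),
  };
}

// Core HPA formula for a single utilization metric, clamped to [min, max].
function hpaDesiredReplicas(current, currentUtilization, targetUtilization, minReplicas, maxReplicas) {
  const desired = Math.ceil(current * (currentUtilization / targetUtilization));
  return Math.min(maxReplicas, Math.max(minReplicas, desired));
}

console.log(rolloutBounds(3, 1, 0));                // { maxPods: 4, minAvailable: 3 }
console.log(rolloutBounds(10, '25%', '25%'));       // { maxPods: 13, minAvailable: 8 }
console.log(hpaDesiredReplicas(3, 140, 70, 3, 10)); // 6
console.log(hpaDesiredReplicas(3, 30, 70, 3, 10));  // 3
```

With both CPU and memory metrics configured, the HPA computes a proposal per metric and uses the largest. The scaleUp policy in api-hpa (at most 50% more pods per 60 s) would also spread a jump from 3 to 6 replicas over more than one step.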

# Apply all manifests
kubectl apply -f k8s/

# Check deployment status
kubectl rollout status deployment/api-deployment -n production

# Watch pods
kubectl get pods -n production -w

# Check logs
kubectl logs -f deployment/api-deployment -n production

# Scale manually (while api-hpa manages the Deployment, it will adjust this count again)
kubectl scale deployment/api-deployment --replicas=5 -n production

# Rollback if needed
kubectl rollout undo deployment/api-deployment -n production

Infrastructure as Code (IaC)

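Using Terraform to provision AWS infrastructure. The configuration below references IAM roles, security groups, an ALB listener, a CloudWatch log group, Secrets Manager secrets and input variables that are defined elsewhere and omitted here for brevity: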

Terraform AWS ECS Setup

# main.tf
terraform {
  required_version = ">= 1.0"

  backend "s3" {
    bucket         = "terraform-state-neeraj"
    key            = "production/terraform.tfstate"
    region         = "ap-south-1"
    encrypt        = true
    dynamodb_table = "terraform-locks"
  }

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

provider "aws" {
  region = var.aws_region

  default_tags {
    tags = {
      Environment = var.environment
      ManagedBy   = "Terraform"
      Project     = "API-Service"
    }
  }
}

# VPC Configuration
module "vpc" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.0" # pin the module version for reproducible plans

  name = "${var.environment}-vpc"
  cidr = "10.0.0.0/16"

  azs             = ["ap-south-1a", "ap-south-1b", "ap-south-1c"]
  private_subnets = ["10.0.1.0/24", "10.0.2.0/24", "10.0.3.0/24"]
  public_subnets  = ["10.0.101.0/24", "10.0.102.0/24", "10.0.103.0/24"]

  enable_nat_gateway   = true
  enable_vpn_gateway   = false
  enable_dns_hostnames = true
  enable_dns_support   = true

  tags = {
    Name = "${var.environment}-vpc"
  }
}

# ECS Cluster
resource "aws_ecs_cluster" "main" {
  name = "${var.environment}-cluster"

  setting {
    name  = "containerInsights"
    value = "enabled"
  }

  tags = {
    Name = "${var.environment}-ecs-cluster"
  }
}

# ECS Task Definition
resource "aws_ecs_task_definition" "api" {
  family                   = "api-service"
  network_mode             = "awsvpc"
  requires_compatibilities = ["FARGATE"]
  cpu                      = "512"
  memory                   = "1024"
  execution_role_arn       = aws_iam_role.ecs_execution_role.arn
  task_role_arn            = aws_iam_role.ecs_task_role.arn

  container_definitions = jsonencode([
    {
      name      = "api"
      image     = "${var.ecr_repository_url}:latest"
      essential = true

      portMappings = [
        {
          containerPort = 3000
          protocol      = "tcp"
        }
      ]

      environment = [
        {
          name  = "NODE_ENV"
          value = var.environment
        },
        {
          name  = "PORT"
          value = "3000"
        }
      ]

      secrets = [
        {
          name      = "DATABASE_URL"
          valueFrom = aws_secretsmanager_secret.db_url.arn
        },
        {
          name      = "JWT_SECRET"
          valueFrom = aws_secretsmanager_secret.jwt_secret.arn
        }
      ]

      logConfiguration = {
        logDriver = "awslogs"
        options = {
          "awslogs-group"         = aws_cloudwatch_log_group.api.name
          "awslogs-region"        = var.aws_region
          "awslogs-stream-prefix" = "api"
        }
      }

      healthCheck = {
        # node:18-alpine includes BusyBox wget but not curl
        command     = ["CMD-SHELL", "wget -q -O /dev/null http://localhost:3000/health || exit 1"]
        interval    = 30
        timeout     = 5
        retries     = 3
        startPeriod = 60
      }
    }
  ])
}

# ECS Service
resource "aws_ecs_service" "api" {
  name            = "api-service"
  cluster         = aws_ecs_cluster.main.id
  task_definition = aws_ecs_task_definition.api.arn
  desired_count   = var.desired_count
  launch_type     = "FARGATE"

  network_configuration {
    subnets          = module.vpc.private_subnets
    security_groups  = [aws_security_group.ecs_tasks.id]
    assign_public_ip = false
  }

  load_balancer {
    target_group_arn = aws_lb_target_group.api.arn
    container_name   = "api"
    container_port   = 3000
  }

  # Top-level arguments in the AWS provider (there is no deployment_configuration block)
  deployment_maximum_percent         = 200
  deployment_minimum_healthy_percent = 100

  # Let the autoscaling policy own the task count after creation
  lifecycle {
    ignore_changes = [desired_count]
  }

  depends_on = [aws_lb_listener.api]
}

# Application Load Balancer
resource "aws_lb" "api" {
  name               = "${var.environment}-api-alb"
  internal           = false
  load_balancer_type = "application"
  security_groups    = [aws_security_group.alb.id]
  subnets            = module.vpc.public_subnets

  enable_deletion_protection = true
  enable_http2               = true

  tags = {
    Name = "${var.environment}-api-alb"
  }
}

# Target Group
resource "aws_lb_target_group" "api" {
  name        = "${var.environment}-api-tg"
  port        = 3000
  protocol    = "HTTP"
  vpc_id      = module.vpc.vpc_id
  target_type = "ip"

  health_check {
    enabled             = true
    healthy_threshold   = 2
    unhealthy_threshold = 3
    timeout             = 5
    interval            = 30
    path                = "/health"
    matcher             = "200"
  }

  deregistration_delay = 30
}

# Auto Scaling
resource "aws_appautoscaling_target" "ecs_target" {
  max_capacity       = 10
  min_capacity       = 2
  resource_id        = "service/${aws_ecs_cluster.main.name}/${aws_ecs_service.api.name}"
  scalable_dimension = "ecs:service:DesiredCount"
  service_namespace  = "ecs"
}

resource "aws_appautoscaling_policy" "ecs_cpu_policy" {
  name               = "cpu-autoscaling"
  policy_type        = "TargetTrackingScaling"
  resource_id        = aws_appautoscaling_target.ecs_target.resource_id
  scalable_dimension = aws_appautoscaling_target.ecs_target.scalable_dimension
  service_namespace  = aws_appautoscaling_target.ecs_target.service_namespace

  target_tracking_scaling_policy_configuration {
    predefined_metric_specification {
      predefined_metric_type = "ECSServiceAverageCPUUtilization"
    }

    target_value       = 70.0
    scale_in_cooldown  = 300
    scale_out_cooldown = 60
  }
}

Monitoring and Observability

Monitoring setup with Prometheus, which Grafana can then use as a data source for dashboards:

Prometheus Configuration

# prometheus.yml
global:
  scrape_interval: 15s
  evaluation_interval: 15s
  external_labels:
    cluster: 'production'
    environment: 'production'

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
            - alertmanager:9093

# Load rules once and periodically evaluate them
rule_files:
  - "alerts/*.yml"

# Scrape configurations
scrape_configs:
  # Prometheus itself
  - job_name: 'prometheus'
    static_configs:
      - targets: ['localhost:9090']

  # Node exporter
  - job_name: 'node'
    static_configs:
      - targets: ['node-exporter:9100']

  # Application metrics
  - job_name: 'api'
    kubernetes_sd_configs:
      - role: pod
        namespaces:
          names:
            - production
    relabel_configs:
      - source_labels: [__meta_kubernetes_pod_label_app]
        action: keep
        regex: api
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: pod_name
      - source_labels: [__address__]
        action: replace
        regex: ([^:]+)(?::\d+)?
        replacement: ${1}:3000
        target_label: __address__

Application Metrics (Node.js)
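The module below records request latency in a prom-client Histogram, which does not keep individual durations. Each observation increments every cumulative bucket whose upper bound (le) it fits under, and Prometheus later estimates quantiles from those counts by linear interpolation inside the matching bucket, as histogram_quantile() does. This simplified sketch reproduces both steps with the same bucket boundaries as http_request_duration_seconds; the durations are made up.

```javascript
// Simplified Prometheus histogram: cumulative `le` bucket counts plus the
// linear interpolation histogram_quantile() applies to them.
const BOUNDS = [0.1, 0.5, 1, 2, 5, 10]; // same as http_request_duration_seconds

function cumulativeBuckets(observations, bounds = BOUNDS) {
  return [...bounds, Infinity].map((le) => ({
    le,
    count: observations.filter((v) => v <= le).length,
  }));
}

function estimateQuantile(q, buckets) {
  if (!(q > 0 && q <= 1)) throw new RangeError('q must be in (0, 1]');
  const total = buckets[buckets.length - 1].count; // the +Inf bucket counts everything
  if (total === 0) return NaN;
  const rank = q * total;
  const i = buckets.findIndex((b) => b.count >= rank);
  // A rank in the +Inf bucket cannot be interpolated: return the highest finite bound.
  if (buckets[i].le === Infinity) return buckets[i - 1].le;
  const lower = i === 0 ? 0 : buckets[i - 1].le;
  const below = i === 0 ? 0 : buckets[i - 1].count;
  return lower + (buckets[i].le - lower) * ((rank - below) / (buckets[i].count - below));
}

// Hypothetical request durations in seconds.
const durations = [0.05, 0.2, 0.3, 0.4, 0.7, 1.5, 3];
const buckets = cumulativeBuckets(durations);
console.log(buckets.map((b) => `le=${b.le}: ${b.count}`).join(', '));
console.log(estimateQuantile(0.5, buckets).toFixed(3));  // 0.433
console.log(estimateQuantile(0.95, buckets).toFixed(3)); // 3.950
```

The largest sample here is 3 s, yet the p95 estimate is about 3.95 s, because all the histogram knows is that the value lies somewhere in the 2 to 5 s bucket. Placing bucket boundaries near the latencies you alert on keeps that error small.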

// metrics.js
const client = require('prom-client');
const express = require('express');

// Create a Registry
const register = new client.Registry();

// Add default metrics
client.collectDefaultMetrics({ register });

// Custom metrics
const httpRequestDuration = new client.Histogram({
  name: 'http_request_duration_seconds',
  help: 'Duration of HTTP requests in seconds',
  labelNames: ['method', 'route', 'status_code'],
  buckets: [0.1, 0.5, 1, 2, 5, 10]
});

const httpRequestTotal = new client.Counter({
  name: 'http_requests_total',
  help: 'Total number of HTTP requests',
  labelNames: ['method', 'route', 'status_code']
});

const activeConnections = new client.Gauge({
  name: 'active_connections',
  help: 'Number of active connections'
});

const databaseQueryDuration = new client.Histogram({
  name: 'database_query_duration_seconds',
  help: 'Duration of database queries in seconds',
  labelNames: ['operation', 'table'],
  buckets: [0.01, 0.05, 0.1, 0.5, 1, 2]
});

// Register metrics
register.registerMetric(httpRequestDuration);
register.registerMetric(httpRequestTotal);
register.registerMetric(activeConnections);
register.registerMetric(databaseQueryDuration);

// Middleware to track metrics
const metricsMiddleware = (req, res, next) => {
  const start = Date.now();

  res.on('finish', () => {
    const duration = (Date.now() - start) / 1000;
    // Label with the matched route pattern (e.g. /users/:id), not the raw path,
    // so label cardinality stays bounded; unmatched requests share one value.
    const route = req.route ? req.baseUrl + req.route.path : 'unmatched';

    httpRequestDuration
      .labels(req.method, route, res.statusCode)
      .observe(duration);

    httpRequestTotal
      .labels(req.method, route, res.statusCode)
      .inc();
  });

  next();
};

// Metrics endpoint
const metricsRouter = express.Router();

metricsRouter.get('/metrics', async (req, res) => {
  res.set('Content-Type', register.contentType);
  res.end(await register.metrics());
});

module.exports = {
  metricsMiddleware,
  metricsRouter,
  httpRequestDuration,
  activeConnections,
  databaseQueryDuration
};

Security Best Practices
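Both deployment targets in this article hand secrets such as DATABASE_URL and JWT_SECRET to the application as environment variables (the ECS secrets list and the Kubernetes secretRef). On the application side, a common complement is to validate them at startup and never log their values. The helpers below (loadRequiredSecrets, redacted) are hypothetical names for illustration, not part of any library:

```javascript
// Hypothetical startup check: collect required secrets from the environment,
// failing fast with the *names* of anything missing or empty, never the values.
function loadRequiredSecrets(env, names) {
  const missing = names.filter((name) => !env[name]);
  if (missing.length > 0) {
    throw new Error(`Missing required secrets: ${missing.join(', ')}`);
  }
  return Object.fromEntries(names.map((name) => [name, env[name]]));
}

// Safe-to-log view of a config object: keys kept, values masked.
function redacted(config) {
  return Object.fromEntries(Object.keys(config).map((key) => [key, '***']));
}

// Example with a fake environment (in the service you would pass process.env).
const fakeEnv = { DATABASE_URL: 'postgresql://user:pass@db:5432/app', JWT_SECRET: 'dev-only' };
const secrets = loadRequiredSecrets(fakeEnv, ['DATABASE_URL', 'JWT_SECRET']);
console.log(redacted(secrets)); // { DATABASE_URL: '***', JWT_SECRET: '***' }

try {
  loadRequiredSecrets({}, ['DATABASE_URL', 'JWT_SECRET']);
} catch (err) {
  console.log(err.message); // Missing required secrets: DATABASE_URL, JWT_SECRET
}
```

Running this check before the server starts listening turns a misconfigured deployment into an immediate, readable startup failure instead of an error on the first database query.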

1. Secret Management

Using AWS Secrets Manager with ECS (task definition excerpt):

{
  "containerDefinitions": [{
    "secrets": [
      {
        "name": "DATABASE_URL",
        "valueFrom": "arn:aws:secretsmanager:ap-south-1:123456789012:secret:prod/db-url"
      }
    ]
  }]
}

2. Network Policies (Kubernetes)
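The policy that follows is an allow-list. Once a NetworkPolicy that lists Egress in policyTypes selects a pod, any outbound connection from that pod is dropped unless some egress rule matches it, and that includes DNS: the postgres and redis rules alone do not permit lookups of those service names. A simplified model of the egress check, covering pod labels and ports only (no namespaces, CIDRs or port ranges) and not how a CNI plugin implements it:

```javascript
// Simplified NetworkPolicy egress model. An empty or missing selector matches all pods.
function matches(selector = {}, labels = {}) {
  return Object.entries(selector).every(([key, value]) => labels[key] === value);
}

function egressAllowed(policies, sourceLabels, dest) {
  const selecting = policies.filter(
    (p) => matches(p.podSelector, sourceLabels) && p.policyTypes.includes('Egress'),
  );
  // A pod that no Egress policy selects is not isolated: all egress is allowed.
  if (selecting.length === 0) return true;
  // Otherwise the connection must match at least one rule of a selecting policy.
  return selecting.some((p) =>
    (p.egress ?? []).some((rule) => {
      const peerOk = !rule.to?.length || rule.to.some((peer) => matches(peer.podSelector, dest.labels));
      const portOk = !rule.ports?.length ||
        rule.ports.some((pt) => (pt.protocol ?? 'TCP') === dest.protocol && pt.port === dest.port);
      return peerOk && portOk;
    }),
  );
}

// Just the postgres and redis egress rules from api-network-policy.
const apiPolicy = {
  podSelector: { app: 'api' },
  policyTypes: ['Ingress', 'Egress'],
  egress: [
    { to: [{ podSelector: { app: 'postgres' } }], ports: [{ protocol: 'TCP', port: 5432 }] },
    { to: [{ podSelector: { app: 'redis' } }], ports: [{ protocol: 'TCP', port: 6379 }] },
  ],
};

const api = { app: 'api' };
console.log(egressAllowed([apiPolicy], api, { labels: { app: 'postgres' }, protocol: 'TCP', port: 5432 })); // true
console.log(egressAllowed([apiPolicy], api, { labels: { app: 'redis' }, protocol: 'TCP', port: 5432 }));    // false
console.log(egressAllowed([apiPolicy], api, { labels: { 'k8s-app': 'kube-dns' }, protocol: 'UDP', port: 53 })); // false
```

In a real cluster the DNS peer usually lives in kube-system, so an egress rule for it needs a namespaceSelector as well as a podSelector.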

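apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: api-network-policy
  namespace: production
spec:
  podSelector:
    matchLabels:
      app: api
  policyTypes:
    - Ingress
    - Egress
  ingress:
    - from:
        # Matches the env label set on the production namespace in namespace.yaml
        - namespaceSelector:
            matchLabels:
              env: production
        - podSelector:
            matchLabels:
              role: ingress
      ports:
        - protocol: TCP
          port: 3000
  egress:
    - to:
        - podSelector:
            matchLabels:
              app: postgres
      ports:
        - protocol: TCP
          port: 5432
    - to:
        - podSelector:
            matchLabels:
              app: redis
      ports:
        - protocol: TCP
          port: 6379
    # Allow DNS to CoreDNS/kube-dns; without it, names like "postgres" cannot be resolved
    - to:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: kube-system
          podSelector:
            matchLabels:
              k8s-app: kube-dns
      ports:
        - protocol: UDP
          port: 53
        - protocol: TCP
          port: 53

3. Security Scanning in CI/CD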
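The Trivy step that follows records HIGH and CRITICAL findings as SARIF for GitHub's Security tab, but by default trivy-action does not fail the job (its exit-code input defaults to 0), so setting exit-code: '1' is the simplest way to make it a hard gate. Where custom logic such as allow-listing is needed, a small script over Trivy's JSON output (trivy image --format json) is an alternative. The sketch below is hypothetical and assumes the report shape Results[].Vulnerabilities[] with Target, VulnerabilityID, PkgName and Severity fields; verify those against the Trivy version you run.

```javascript
// Hypothetical severity gate over a Trivy JSON report.
// Assumed shape: { Results: [{ Target, Vulnerabilities: [{ VulnerabilityID, PkgName, Severity }] }] }
function blockingFindings(report, blocking = ['CRITICAL', 'HIGH']) {
  const gate = new Set(blocking);
  return (report.Results ?? []).flatMap((result) =>
    (result.Vulnerabilities ?? [])
      .filter((v) => gate.has(v.Severity))
      .map((v) => `${result.Target}: ${v.VulnerabilityID} (${v.Severity}) in ${v.PkgName}`),
  );
}

// Illustrative report with placeholder IDs, not real scan output.
const report = {
  Results: [
    {
      Target: 'api:latest (alpine)',
      Vulnerabilities: [
        { VulnerabilityID: 'CVE-0000-0001', PkgName: 'libexample', Severity: 'HIGH' },
        { VulnerabilityID: 'CVE-0000-0002', PkgName: 'libother', Severity: 'LOW' },
      ],
    },
    { Target: 'package-lock.json' }, // targets without findings may omit Vulnerabilities
  ],
};

const findings = blockingFindings(report);
findings.forEach((f) => console.log(f));
```

In CI, the script would set a non-zero exit code (for example process.exitCode = 1) when findings is non-empty, which fails the step.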

# Trivy container scanning
- name: Run Trivy vulnerability scanner
  uses: aquasecurity/trivy-action@master
  with:
    image-ref: 'ghcr.io/neerajsde/api:latest'
    format: 'sarif'
    output: 'trivy-results.sarif'
    severity: 'CRITICAL,HIGH'

# Uploading SARIF requires the job permission security-events: write
- name: Upload Trivy results to GitHub Security
  uses: github/codeql-action/upload-sarif@v3
  with:
    sarif_file: 'trivy-results.sarif'

Real-World Implementation

Let's put it all together with a complete deployment workflow:

Deployment Script

#!/bin/bash
# deploy.sh
set -euo pipefail

# Colors for output
RED='\033[0;31m'
GREEN='\033[0;32m'
YELLOW='\033[1;33m'
NC='\033[0m' # No Color

# Variables
ENVIRONMENT=${1:-staging}
VERSION=${2:-latest}
CLUSTER_NAME="${ENVIRONMENT}-cluster"
SERVICE_NAME="api-service"
REGION="ap-south-1"
# ECR registry host must be supplied by the caller's environment
ECR_REGISTRY=${ECR_REGISTRY:?ECR_REGISTRY must be set}

echo -e "${GREEN}Starting deployment to ${ENVIRONMENT}...${NC}"

# Step 1: Run tests (checked inline so the message prints before set -e would exit)
echo -e "${YELLOW}Running tests...${NC}"
if ! npm run test:all; then
  echo -e "${RED}Tests failed! Aborting deployment.${NC}"
  exit 1
fi

# Step 2: Build Docker image
echo -e "${YELLOW}Building Docker image...${NC}"
docker build -t "api:${VERSION}" .

# Step 3: Security scan (--exit-code 1 makes Trivy fail when matching vulnerabilities exist)
echo -e "${YELLOW}Running security scan...${NC}"
if ! trivy image --exit-code 1 --severity HIGH,CRITICAL "api:${VERSION}"; then
  echo -e "${RED}Security vulnerabilities found! Review and fix before deploying.${NC}"
  exit 1
fi

# Step 4: Tag and push to ECR
echo -e "${YELLOW}Pushing to ECR...${NC}"
aws ecr get-login-password --region "${REGION}" | docker login --username AWS --password-stdin "${ECR_REGISTRY}"
docker tag "api:${VERSION}" "${ECR_REGISTRY}/api:${VERSION}"
docker push "${ECR_REGISTRY}/api:${VERSION}"

# Step 5: Update ECS service
# --force-new-deployment restarts tasks with the current task definition, so the pushed
# tag is only picked up if that definition references it (e.g. :latest)
echo -e "${YELLOW}Updating ECS service...${NC}"
aws ecs update-service \
  --cluster "${CLUSTER_NAME}" \
  --service "${SERVICE_NAME}" \
  --force-new-deployment \
  --region "${REGION}"

# Step 6: Wait for deployment to complete
echo -e "${YELLOW}Waiting for deployment to stabilize...${NC}"
aws ecs wait services-stable \
  --cluster "${CLUSTER_NAME}" \
  --services "${SERVICE_NAME}" \
  --region "${REGION}"

# Step 7: Health check
echo -e "${YELLOW}Running health checks...${NC}"
HEALTH_URL="https://api.neerajprajapati.in/health"
MAX_RETRIES=10
RETRY_COUNT=0

while [ "$RETRY_COUNT" -lt "$MAX_RETRIES" ]; do
  # "|| true" keeps set -e from aborting when curl cannot connect (it then reports 000)
  HTTP_CODE=$(curl -s -o /dev/null -w "%{http_code}" "${HEALTH_URL}" || true)
  if [ "$HTTP_CODE" = "200" ]; then
    echo -e "${GREEN}Health check passed!${NC}"
    break
  fi
  RETRY_COUNT=$((RETRY_COUNT+1))
  echo "Health check attempt ${RETRY_COUNT}/${MAX_RETRIES} failed. Retrying in 10 seconds..."
  sleep 10
done

if [ "$RETRY_COUNT" -eq "$MAX_RETRIES" ]; then
  echo -e "${RED}Health check failed after ${MAX_RETRIES} attempts. Rolling back...${NC}"
  # Rollback logic here
  exit 1
fi

# Step 8: Run smoke tests
echo -e "${YELLOW}Running smoke tests...${NC}"
npm run test:smoke -- --env="${ENVIRONMENT}"

echo -e "${GREEN}Deployment completed successfully! 🚀${NC}"
echo -e "Version: ${VERSION}"
echo -e "Environment: ${ENVIRONMENT}"
echo -e "Service: ${SERVICE_NAME}"

Conclusion

Building a robust DevOps pipeline requires careful consideration of:

- Automation: Every manual step is an opportunity for error

- Security: Bake security into every layer

- Observability: You can't fix what you can't see

- Resilience: Plan for failure at every level

- Scalability: Design for growth from day one

The tools and practices we've covered form the foundation of modern DevOps. Start small, automate incrementally, and continuously improve your pipeline based on real-world feedback.

Remember: DevOps is a journey, not a destination. Keep iterating, keep learning, and keep shipping! 🚀

Additional Resources

- The Twelve-Factor App

- Kubernetes Documentation

- Docker Best Practices

- AWS Well-Architected Framework

- Prometheus Best Practices

About the Author: Neeraj Prajapati is a Senior Backend Developer specializing in scalable system design, cloud architecture, and DevOps practices. Connect on LinkedIn or GitHub.